For the better part of a decade, the “DevOps movement” has focused on a single, primary goal: velocity. We built CI/CD (Continuous Integration/Continuous Deployment) pipelines to automate the path from a developer’s keyboard to a production environment, effectively removing the manual hand-offs that used to define the software release cycle. However, as we move deeper into the era of microservices, distributed architectures, and hybrid cloud environments, we are reaching the limits of traditional, script-based automation.

The reality on the ground for most Senior Product Managers and CTOs is that pipelines have become “brittle.” We’ve replaced manual labor with complex, hard-coded scripts that require constant maintenance. When a build fails, an engineer still has to spend hours sifting through logs to find the “why.” This is where the next evolution of DevOps—often referred to as AIOps—enters the frame. By integrating Artificial Intelligence into the CI/CD pipeline, we aren’t just automating tasks; we are automating the decision-making process itself. This shift is critical as organizations realize that manual oversight of modern cloud-native environments is no longer humanly possible.

The Complexity Crisis in Modern Product Delivery

Traditional CI/CD pipelines operate on “if-then” logic. They are deterministic. If a test passes, the build moves forward; if it fails, it stops. This worked well when applications were monolithic and predictable. Today, however, a single deployment might interact with dozens of APIs, third-party services, and transient cloud resources. In this environment, failures aren’t always binary. We see “flaky tests,” intermittent network latency, and environment-specific bugs that traditional automation is ill-equipped to handle.

This complexity creates a “cognitive tax” on engineering teams. Gartner predicts that by 2026, 80% of software engineering organizations will establish platform teams as internal providers of reusable services, tools, and components for software delivery. At the heart of this transition is the realization that human engineers cannot keep pace with the sheer volume of data generated by modern telemetry. AI improves CI/CD pipelines by acting as a high-speed analytical layer, identifying patterns in deployment data that would take a human developer hours or days to uncover. Without this analytical layer, the “Continuous” in CI/CD becomes a misnomer, interrupted by frequent manual interventions and troubleshooting marathons.

Intelligent Integration: Moving Beyond Scripted Builds

The “CI” in CI/CD is where the most significant developer friction occurs. We’ve all seen the “broken build” notifications that halt productivity. AI-driven DevOps tools are changing the way we approach Continuous Integration by introducing predictive capabilities into the build phase. Instead of running every single test in a massive suite—which can take hours—AI models can analyze which parts of the codebase were actually touched and predict which tests are most likely to fail.

This “Predictive Test Selection” reduces feedback loops from hours to minutes. Microsoft Research has demonstrated that machine learning can successfully identify which tests are redundant for specific changes, drastically reducing resource consumption. By applying machine learning to historical test data, these systems can also identify “flaky tests,” those frustrating tests that fail and pass intermittently without changes to the code.
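To make the idea concrete, here is a minimal sketch of test selection driven by history. It is not how any particular vendor or the Microsoft Research work implements it: it swaps the trained model for a simple co-failure frequency, and the `HISTORY` data, file paths, and test names are all hypothetical.

```python
from collections import defaultdict

# Hypothetical history: (files changed in a commit, tests that failed on that commit).
HISTORY = [
    ({"billing/invoice.py"}, {"test_invoice_total"}),
    ({"billing/invoice.py", "billing/tax.py"}, {"test_invoice_total", "test_tax_rounding"}),
    ({"auth/session.py"}, {"test_login"}),
    ({"billing/tax.py"}, set()),
]


def build_failure_counts(history):
    """Count how often each file changed and how often each test failed alongside it."""
    changes = defaultdict(int)
    failures = defaultdict(lambda: defaultdict(int))
    for files, failed_tests in history:
        for path in files:
            changes[path] += 1
            for test in failed_tests:
                failures[path][test] += 1
    return changes, failures


def select_tests(changed_files, history, threshold=0.5):
    """Return tests whose past failure rate for any changed file meets the threshold."""
    changes, failures = build_failure_counts(history)
    scores = {}
    for path in changed_files:
        for test, count in failures[path].items():
            rate = count / changes[path]
            scores[test] = max(scores.get(test, 0.0), rate)
    return sorted(test for test, score in scores.items() if score >= threshold)


print(select_tests({"billing/invoice.py"}, HISTORY))
```

A file with no history selects nothing here, so a real pipeline would need a fallback, such as running the full suite for unseen files and on a regular schedule.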

An AI-enhanced pipeline can automatically flag these tests for quarantine, ensuring that the development team isn’t distracted by false positives, thereby maintaining a high level of trust in the automation itself. This level of precision allows teams to maintain a high “developer experience” (DevEx), which is increasingly tied to overall organizational productivity.
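One simple signal for quarantine can be sketched without any model at all: a test that both passed and failed on the same commit changed its outcome without a code change. The function name and the run-record shape below are assumptions for illustration, not a specific tool's API.

```python
from collections import defaultdict


def find_flaky_tests(runs):
    """Flag tests that both passed and failed on the same commit.

    runs: iterable of (test_name, commit_sha, passed) tuples (hypothetical shape).
    """
    outcomes = defaultdict(set)
    for test, sha, passed in runs:
        outcomes[(test, sha)].add(passed)
    # Seeing both True and False for one (test, commit) pair means the result
    # changed while the code did not.
    return sorted({test for (test, _sha), seen in outcomes.items() if seen == {True, False}})


runs = [
    ("test_a", "c1", True),
    ("test_a", "c1", False),   # same commit, different outcome: flaky candidate
    ("test_b", "c1", False),
    ("test_b", "c2", True),    # outcome changed, but so did the code
    ("test_c", "c1", True),
]
print(find_flaky_tests(runs))
```

A learned approach would add features such as duration variance or retry counts; this rule is only the baseline such a system would build on.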

Shifting Quality Left with Generative AI

Beyond just running tests, AI is now being used to write them and analyze the code itself before it even hits the repository. Generative AI and Large Language Models (LLMs) are being integrated into the “inner loop” of development. Rather than relying only on linting or rule-based static analysis, which catch syntax errors and predefined patterns, AI-powered tools can now flag likely logical flaws, security vulnerabilities, and even architectural deviations.

IBM has highlighted how AI-infused DevOps allows for “intelligent remediation,” where the system doesn’t just find a bug but suggests the specific code change required to fix it. This proactive approach significantly reduces the time spent in the “Code-Review-Fix” cycle. For a Product Manager, this means that the “Definition of Done” is met more consistently, and features move from the backlog to the staging environment with much higher predictability. It transforms the CI pipeline from a passive gatekeeper into an active contributor to code quality.

Continuous Deployment and the Rise of Autonomous Rollouts

While CI focuses on the health of the code, Continuous Deployment (CD) focuses on the health of the system. This is where AI truly shines in a production environment. The traditional approach to deployment—the “Big Bang” release—is dead. It has been replaced by canary releases and blue-green deployments. However, managing these rollouts manually or via static scripts is an operational nightmare that often leads to “deployment anxiety.”

AI improves the “CD” portion of the pipeline through intelligent automated rollbacks and canary analysis. Consider a scenario where a new microservice is deployed to 5% of your user base. A standard automated script might look at CPU usage and error rates. If they stay below a certain threshold, it proceeds. But what if the error rate is fine, yet the “time-to-purchase” metric for those users has increased by 15%? Traditional DevOps scripts won’t catch that business-level degradation.

McKinsey & Company reports that high-performing organizations are increasingly using AI to bridge the gap between technical metrics and business outcomes. An AI-driven deployment engine monitors high-dimensional data, including business KPIs and deep observability metrics, to determine the “blast radius” of a change. If the model detects a statistical anomaly that correlates with the deployment, it can trigger an autonomous rollback without waiting for a human to notice and intervene. This level of precision is exactly what we focus on at Doshby; we help organizations move from “automated” to “autonomous” delivery, ensuring that software reaches the user without compromising system stability.
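The time-to-purchase scenario can be sketched as a canary gate that checks a business metric in addition to the error rate. This is an illustrative sketch under stated assumptions: the function names, thresholds, and sample values are hypothetical, and a Welch's t-statistic stands in for whatever anomaly model a production system would use.

```python
from math import sqrt
from statistics import mean, variance


def welch_t(baseline, canary):
    """Welch's t-statistic for the canary mean relative to the baseline mean."""
    spread = variance(baseline) / len(baseline) + variance(canary) / len(canary)
    if spread == 0:
        return 0.0
    return (mean(canary) - mean(baseline)) / sqrt(spread)


def canary_decision(baseline, canary, error_rate,
                    max_error_rate=0.01, max_increase=0.10, t_cutoff=2.0):
    """Return "rollback" or "promote" from a technical and a business signal."""
    # Technical gate: the check a static script would already perform.
    if error_rate > max_error_rate:
        return "rollback"
    # Business gate: has time-to-purchase grown by more than the tolerance,
    # and is the difference large relative to the noise in the samples?
    increase = mean(canary) / mean(baseline) - 1.0
    if increase > max_increase and welch_t(baseline, canary) > t_cutoff:
        return "rollback"
    return "promote"


# Hypothetical time-to-purchase samples in seconds.
baseline = [60, 62, 58, 61, 59, 60, 63, 57]
canary = [69, 71, 68, 70, 72, 67, 70, 65]
print(canary_decision(baseline, canary, error_rate=0.002))
```

Here the error rate is healthy, yet the canary cohort takes 15% longer to purchase, so the gate returns a rollback that an error-rate-only script would miss.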

Improving MTTR with AI-Driven Observability

One of the most critical metrics for any product organization is Mean Time to Recovery (MTTR). When things go wrong in production—and they eventually do—the clock starts ticking on both lost revenue and brand reputation. The traditional DevOps response involves “War Rooms,” manual log searching, and hypothesis testing. AI fundamentally alters this workflow by providing automated root cause analysis (RCA).

By training on historical incident data and real-time telemetry, AI models can correlate a spike in latency in one service with a configuration change in another. Instead of an alert that says “Service X is down,” an AI-enhanced pipeline provides an insight: “Service X is failing because of a database connection timeout introduced by deployment #402.” According to PwC’s Global AI Study, the greatest economic gains from AI will come from automated improvements in productivity and reliability.
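As a rough illustration of that correlation step, the sketch below ranks recent deployments to a failing service or its dependencies. It uses a fixed time window rather than a trained model, and the service names, dependency map, and timestamps are hypothetical.

```python
from datetime import datetime, timedelta


def suspect_deployments(anomaly_start, deployments, dependencies, service,
                        window=timedelta(minutes=30)):
    """Return deployments to `service` or its dependencies that landed within
    `window` before the anomaly started, most recent first."""
    related = {service} | dependencies.get(service, set())
    candidates = [
        d for d in deployments
        if d["service"] in related
        and timedelta(0) <= anomaly_start - d["at"] <= window
    ]
    return sorted(candidates, key=lambda d: d["at"], reverse=True)


# Hypothetical data for illustration only.
dependencies = {"checkout": {"orders-db", "payments"}}
deployments = [
    {"id": 401, "service": "search", "at": datetime(2024, 1, 1, 9, 10)},
    {"id": 402, "service": "orders-db", "at": datetime(2024, 1, 1, 9, 50)},
    {"id": 403, "service": "checkout", "at": datetime(2024, 1, 1, 10, 5)},
]
anomaly_start = datetime(2024, 1, 1, 10, 0)

suspects = suspect_deployments(anomaly_start, deployments, dependencies, "checkout")
print([d["id"] for d in suspects])
```

A production system would weight candidates by learned incident history rather than recency alone, but the output has the same shape: a short, ranked list of changes to inspect first.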

This shift from reactive monitoring to proactive observability is the hallmark of a mature DevOps organization. It allows senior leadership to reallocate engineering hours from “firefighting” to “feature building.” This isn’t just a technical upgrade; it’s a strategic business move. When your infrastructure can self-diagnose and, in some cases, self-heal, your engineering team is freed from the burden of operational toil.

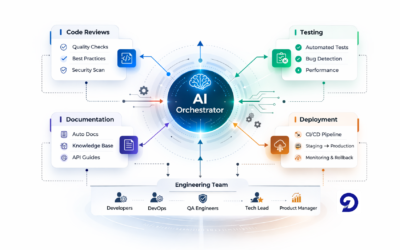

Visualizing the AI-Enhanced Pipeline

To understand the difference, one must visualize the workflow. A traditional pipeline is a linear assembly line. An AI-enhanced pipeline is a multi-layered feedback loop that learns from every execution.

- Hero Image Suggestion: A sophisticated, high-contrast visual showing a traditional linear pipeline being “supercharged” by a glowing neural network overlay. This represents the intelligence layer sitting atop the existing automation stack.

- Workflow Diagram: A teaching tool showing three distinct layers:

  - The Execution Layer (Jenkins/GitHub Actions/GitLab).

  - The Intelligence Layer (AI Impact Analysis/Anomaly Detection).

  - The Feedback Layer (Automated Backlog updates and developer alerts).

- Comparison Chart: A table comparing “Static Automation” (Manual thresholds, high maintenance, binary logic) vs. “AI-Driven Automation” (Dynamic thresholds, self-learning, contextual logic).
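The contrast between manual and dynamic thresholds in the comparison above can be shown in a few lines. This is a simplified sketch: the limit of 500 and the three-sigma rule are assumed values, and a rolling mean and standard deviation stand in for a learned baseline.

```python
from statistics import mean, stdev


def static_alert(value, limit=500.0):
    """Static automation: a hand-picked limit that never adapts."""
    return value > limit


def dynamic_alert(value, recent, k=3.0):
    """Dynamic threshold: alert when value exceeds mean + k * stdev of recent samples."""
    if len(recent) < 2:
        return False  # not enough data to estimate spread
    return value > mean(recent) + k * stdev(recent)


# Hypothetical latency samples in milliseconds.
recent = [100, 102, 98, 101, 99]
print(static_alert(150), dynamic_alert(150, recent))
```

A latency of 150 ms stays far below the static limit, yet it is well outside what this service normally does, so only the dynamic check fires.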

The Business Case: Why This Matters to the C-Suite

For founders and operations leaders, the investment in AI-driven DevOps isn’t just about technical vanity. It is about market responsiveness and cost control. In a hyper-competitive market, the bottleneck is rarely the developers’ coding speed—it’s the “path to production.” If your deployment process is manual and error-prone, you are essentially paying a “complexity tax” on every single feature you ship.

IDC has forecasted that global spending on AI will continue to see double-digit growth as companies scramble to integrate these efficiencies. AI reduces the “wait time” in the software development lifecycle (SDLC). When you reduce the friction of deployments, you increase the frequency of deployments. High-performing teams, as defined by DORA (DevOps Research and Assessment) metrics, deploy more frequently and have lower change failure rates. AI is the lever that allows a mid-sized company to achieve the deployment frequency of a tech giant without needing an army of Site Reliability Engineers (SREs).
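Two of the DORA metrics mentioned here are simple to compute once deployments are recorded. The sketch below assumes a hypothetical record shape with a `day` and a `caused_incident` flag; it illustrates the arithmetic and is not an official DORA tool.

```python
from datetime import date


def deployment_frequency(deploys, start, end):
    """Average deployments per day over the inclusive date range."""
    days = (end - start).days + 1
    in_range = [d for d in deploys if start <= d["day"] <= end]
    return len(in_range) / days


def change_failure_rate(deploys):
    """Share of deployments that led to an incident or rollback."""
    if not deploys:
        return 0.0
    return sum(1 for d in deploys if d["caused_incident"]) / len(deploys)


# Hypothetical week of deployments.
deploys = [
    {"day": date(2024, 1, 1), "caused_incident": False},
    {"day": date(2024, 1, 2), "caused_incident": True},
    {"day": date(2024, 1, 4), "caused_incident": False},
    {"day": date(2024, 1, 7), "caused_incident": False},
]
print(deployment_frequency(deploys, date(2024, 1, 1), date(2024, 1, 7)))
print(change_failure_rate(deploys))
```

Tracking both numbers together matters: a rising deployment frequency is only a win if the change failure rate does not rise with it.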

Implementing the Transition: A Pragmatic Approach

Moving toward AI-driven DevOps does not require a “rip and replace” of your existing Jenkins or GitHub Actions workflows. Instead, it’s about layering intelligence onto your current stack. As a technical strategist, I always recommend a phased approach to avoid overwhelming the team or introducing new variables too quickly.

- Start with Observability: You cannot apply AI to data you aren’t collecting. Ensure your stack is providing deep telemetry. Use platforms that support OpenTelemetry standards to ensure data portability.

- Integrate Smart Testing: Use AI tools to analyze test failures and categorize them into “real bugs” vs. “environment issues.” This immediately reduces developer frustration.

- Automate the “Go/No-Go”: Implement automated canary analysis that looks at more than just 200 OK responses. Look for tools that allow you to define “Service Level Objectives” (SLOs) that the AI can monitor during a rollout.
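For the last step, a go/no-go check against an SLO can be sketched as an error-budget burn rate. The function names, the 99.9% target, and the request counts are assumptions for illustration; real tools typically evaluate this over several time windows.

```python
def error_budget_burn_rate(total_requests, failed_requests, slo_target=0.999):
    """Ratio of the observed error rate to the error rate the SLO allows.

    A value above 1.0 means the rollout is consuming error budget faster
    than the SLO permits.
    """
    if total_requests == 0:
        return 0.0
    allowed = 1.0 - slo_target
    observed = failed_requests / total_requests
    return observed / allowed


def go_no_go(total_requests, failed_requests, slo_target=0.999, max_burn_rate=1.0):
    """Return "go" while the canary stays within the allowed burn rate."""
    rate = error_budget_burn_rate(total_requests, failed_requests, slo_target)
    return "go" if rate <= max_burn_rate else "no-go"


# Hypothetical canary traffic: 10,000 requests during the rollout window.
print(go_no_go(10_000, 5))    # about half the allowed error rate
print(go_no_go(10_000, 30))   # about three times the allowed error rate
```

Framing the gate as a burn rate keeps the decision tied to the objective the business agreed to, rather than to a raw error count that means different things at different traffic levels.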

At Doshby, we specialize in this specific transformation. We understand that AI integration isn’t just about the tools—it’s about the strategy behind them. Whether it’s optimizing your CI/CD pipelines or integrating AI into your core product offering, the goal is always to build a more resilient, scalable, and profitable software delivery engine. We look at your delivery pipeline as a product in itself, one that requires constant optimization and a business-first mindset.

Conclusion: The Future is Autonomous

The era of manual, script-heavy DevOps is drawing to a close. As the scale of our software systems exceeds the capacity for human oversight, AI is no longer a luxury—it is a requirement for survival. By improving CI/CD pipelines with machine learning and predictive analytics, organizations can finally realize the original promise of DevOps: the ability to deliver high-quality software at the speed of business.

The transition from scripted automation to intelligent, autonomous delivery is a journey of maturity. It requires a shift in mindset from “controlling every step” to “governing the AI that manages the steps.” For the modern CTO, this is the path to true operational excellence.

Is your delivery pipeline struggling to keep up with your growth?

At Doshby, we partner with forward-thinking companies to implement AI-driven automation and software strategies that drive real business results. We don’t just provide tools; we provide the strategic roadmap to transform your engineering culture. Contact us today for a strategic consultation on how we can modernize your CI/CD infrastructure and accelerate your path to production.